Modern AI models are powerful, but they are also heavy, slow, and expensive to run.

Quantization is one of the most effective techniques used in real-world AI systems to solve this problem.

This post explains, in simple language, what quantization is, how it is done, what benefits it brings, and which trade-offs you should know before using it.

What Is Quantization?

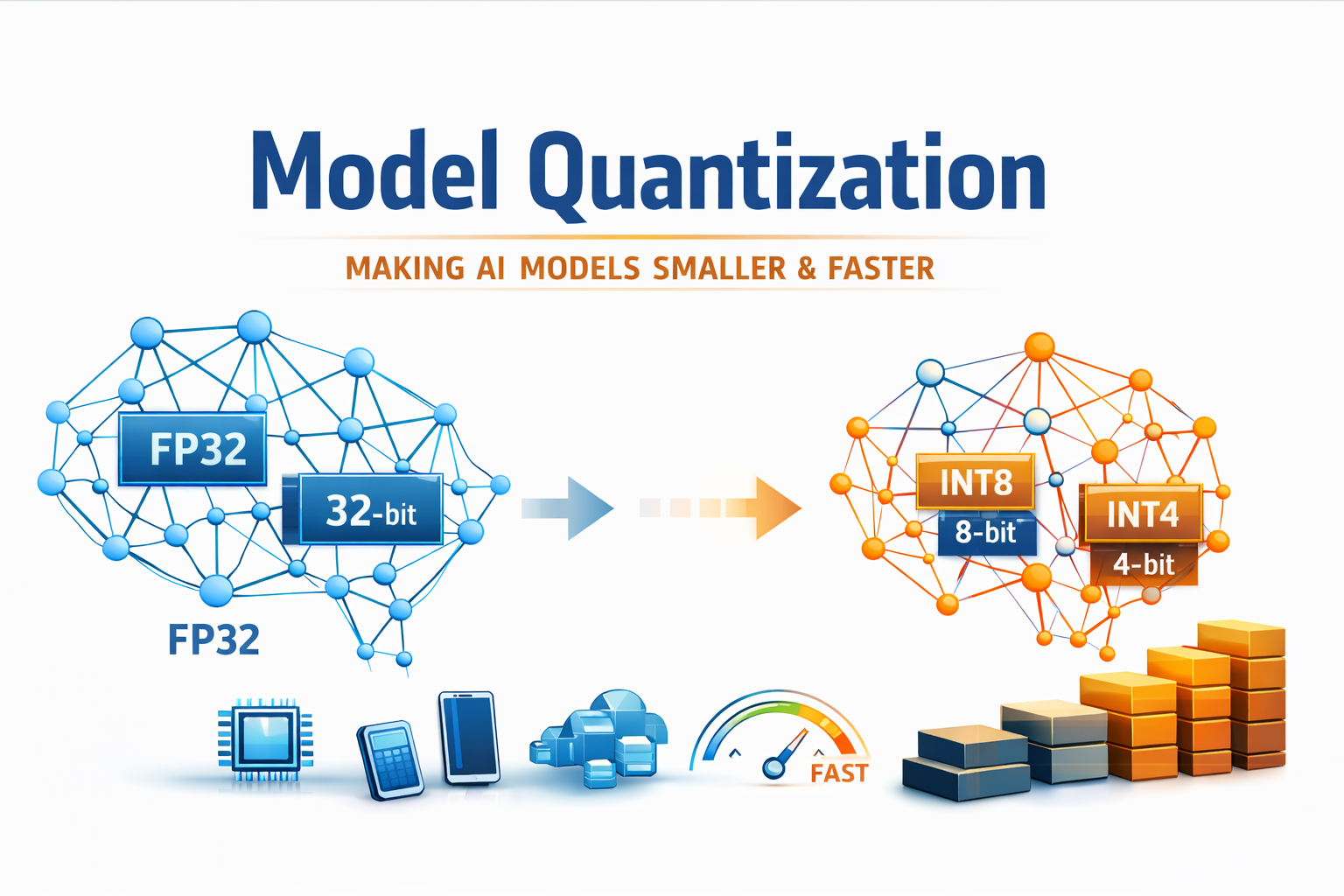

Quantization means storing and running an AI model with lower-precision numbers.

AI models store knowledge as numbers, called weights. Normally these are 32-bit or 16-bit floating-point values. Quantization replaces them with lower-precision values, such as 8-bit or 4-bit integers, that are “good enough” to do the job.

Think of it like this:

- A high-quality image uses more storage

- A compressed image looks almost the same but uses less space

Quantization does the same thing for AI models.

The model becomes lighter and faster, while keeping most of its accuracy.
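
To make the idea concrete, here is a minimal sketch of one common scheme, symmetric 8-bit quantization. The function names and the sample weights are made up for illustration; real libraries add more options, such as zero points and per-channel scales.

```python
def quantize_int8(values):
    """Map floats to integers in [-127, 127] using a single shared scale."""
    max_abs = max(abs(v) for v in values)
    scale = max_abs / 127 if max_abs > 0 else 1.0
    q = [max(-127, min(127, round(v / scale))) for v in values]
    return q, scale


def dequantize(q, scale):
    """Recover approximate float values from the stored integers."""
    return [x * scale for x in q]


weights = [0.82, -1.27, 0.03, 0.5]   # hypothetical full-precision weights
q, scale = quantize_int8(weights)    # small integers plus one float scale
restored = dequantize(q, scale)      # close to the originals, not identical

for original, approx in zip(weights, restored):
    print(f"{original:+.4f} -> {approx:+.4f}")
```

Each restored value differs from the original by at most half of `scale`, which is the "good enough" part of the trade.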

Why Quantization Is Needed

Large AI models create several practical problems:

- They need powerful GPUs

- They consume a lot of memory

- They are slow on CPUs

- They are expensive to scale in production

Quantization helps by reducing the model’s size and computational needs, making it practical for real-world deployment.

How Model Quantization Is Done

There are multiple ways to quantize a model. The most common approaches are explained below.

1. Post-Training Quantization

This is the simplest and most widely used method.

The model is first trained normally using high-precision numbers. After training, the model weights are converted into lower-precision formats such as 8-bit or 4-bit.

Advantages

- Easy to apply

- No retraining required

- Fast deployment

Disadvantages

- Small drop in accuracy is possible

This method is commonly used for inference and production systems.
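
As a rough sketch of the idea, the snippet below takes the weights of a tiny, already trained linear layer and converts them to 8-bit and 4-bit integers with one scale per row. The weight values, the input, and the helper names are assumptions for illustration, not the API of any particular library.

```python
def quantize_rows(matrix, bits=8):
    """Quantize each row of a weight matrix with its own scale."""
    qmax = 2 ** (bits - 1) - 1
    q_rows, scales = [], []
    for row in matrix:
        max_abs = max(abs(w) for w in row)
        scale = max_abs / qmax if max_abs > 0 else 1.0
        q_rows.append([max(-qmax, min(qmax, round(w / scale))) for w in row])
        scales.append(scale)
    return q_rows, scales


def linear(matrix, x):
    """Full-precision layer output: one dot product per row."""
    return [sum(w * xi for w, xi in zip(row, x)) for row in matrix]


def linear_quantized(q_rows, scales, x):
    """Same layer, computed from integer weights and rescaled per row."""
    return [s * sum(q * xi for q, xi in zip(row, x))
            for row, s in zip(q_rows, scales)]


trained_weights = [[0.12, -0.53, 0.91], [1.40, 0.07, -0.66]]  # hypothetical
x = [1.0, 2.0, -1.0]

q8, s8 = quantize_rows(trained_weights, bits=8)
q4, s4 = quantize_rows(trained_weights, bits=4)

reference = linear(trained_weights, x)
out8 = linear_quantized(q8, s8, x)
out4 = linear_quantized(q4, s4, x)
print("float:", reference)
print("8-bit:", out8)
print("4-bit:", out4)
```

No training step appears anywhere in the sketch: the conversion only reads the finished weights, which is why this approach is quick to apply.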

2. Quantization-Aware Training

In this approach, the model is trained while simulating low-precision arithmetic.

The model learns how to handle reduced precision during training itself.

Advantages

- Better accuracy after quantization

- More stable results

Disadvantages

- More complex training

- Longer training time

This method is used when accuracy is critical.
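
A toy sketch of the mechanism, under simplifying assumptions: a single weight, a deliberately coarse grid with step 0.25, and a plain gradient-descent loop. The forward pass uses the quantized weight, while the update is applied to a full-precision copy, a trick often called the straight-through estimator. All names and numbers here are illustrative.

```python
def fake_quant(w, step=0.25, qmax=7):
    """Snap w to the nearest point on a coarse grid (simulated low precision)."""
    q = max(-qmax, min(qmax, round(w / step)))
    return q * step


# Toy data for y = 0.6 * x; the target 0.6 is not on the 0.25 grid.
xs = [1.0, 2.0, 3.0]
ys = [0.6 * x for x in xs]

w = 0.0    # latent full-precision weight, updated by the optimizer
lr = 0.01
for _ in range(200):
    wq = fake_quant(w)  # the forward pass sees the quantized weight
    # Gradient of the mean squared error with respect to wq.
    grad = sum(2 * (wq * x - y) * x for x, y in zip(xs, ys)) / len(xs)
    # Straight-through estimator: treat d(wq)/d(w) as 1 and update w directly.
    w -= lr * grad

deployed_weight = fake_quant(w)
print("latent weight:", w, "deployed weight:", deployed_weight)
```

The latent weight settles near the boundary between the two grid points that surround 0.6, so the deployed weight ends up on one of them. Training has adapted to the grid instead of being surprised by it afterwards.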

3. Dynamic Quantization

Here, the model weights are converted to lower precision ahead of time, while activations are quantized on the fly during inference, using scales computed from each input.

Advantages

- Simple to apply

- Works well on CPUs

Disadvantages

- Usually not as fast as fully quantized models, because of the extra conversion work at inference time
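
The sketch below illustrates one common form of dynamic quantization, in which the weights are already stored as 8-bit integers and the scale for the activations is computed from each input at inference time. The weight row, the inputs, and the function names are assumptions for illustration.

```python
def quantize(values, qmax=127):
    """Symmetric quantization with a scale derived from the values themselves."""
    max_abs = max(abs(v) for v in values)
    scale = max_abs / qmax if max_abs > 0 else 1.0
    q = [max(-qmax, min(qmax, round(v / scale))) for v in values]
    return q, scale


# Hypothetical weight row, quantized once before any input arrives.
weight_q, weight_scale = quantize([0.4, -0.2, 0.1])


def dynamic_dot(activations):
    # The activation scale comes from this input's own range, at run time.
    act_q, act_scale = quantize(activations)
    acc = sum(w * a for w, a in zip(weight_q, act_q))  # integer accumulation
    return acc * weight_scale * act_scale              # rescale to float


small = dynamic_dot([0.01, 0.02, -0.03])
large = dynamic_dot([10.0, 20.0, -30.0])
print(small, large)
```

Because the scale is recomputed per input, very small and very large activations are handled with the same relative precision. The price is the extra scale computation on every call.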

What Parts of a Model Are Quantized?

Typically, quantization applies to:

- Model weights

- Activations

- Sometimes the key-value (KV) cache used by attention (for large language models)

Common precision levels include:

- 8-bit (INT8)

- 4-bit (INT4)

- Lower than 4-bit in experimental setups
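
To see what these bit widths mean in practice, the short sketch below prints how many distinct values a signed integer of each width can represent. The helper name is illustrative.

```python
def signed_int_range(bits):
    """Smallest and largest value of a signed two's-complement integer."""
    return -(2 ** (bits - 1)), 2 ** (bits - 1) - 1


for bits in (8, 4, 2):
    lo, hi = signed_int_range(bits)
    print(f"{bits}-bit: {2 ** bits} levels, range [{lo}, {hi}]")
```

An 8-bit weight can take 256 different values, while a 4-bit weight can take only 16, which is why lower bit widths need more care to keep accuracy.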

Benefits of Quantization

Smaller Model Size

Compared with 32-bit floating point, 8-bit models are about 4 times smaller and 4-bit models are about 8 times smaller, which reduces storage and memory usage.
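
The size reduction follows from simple arithmetic: parameters times bits per parameter. The sketch below assumes a hypothetical model with 7 billion parameters and ignores the small overhead of storing scales alongside the weights.

```python
def model_size_gb(num_params, bits_per_param):
    """Approximate weight storage in gigabytes (1 GB = 1e9 bytes)."""
    return num_params * bits_per_param / 8 / 1e9


params = 7_000_000_000  # hypothetical 7-billion-parameter model
for name, bits in [("FP32", 32), ("FP16", 16), ("INT8", 8), ("INT4", 4)]:
    print(f"{name}: {model_size_gb(params, bits):.1f} GB")
```

Under these assumptions the weights take 28.0 GB at FP32, 14.0 GB at FP16, 7.0 GB at INT8, and 3.5 GB at INT4.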

Faster Inference

Lower-precision arithmetic moves less data through memory and needs less compute per operation, which usually results in faster response times on hardware that supports it.

Lower Cost

Quantization reduces GPU memory usage and enables higher throughput, which directly lowers infrastructure costs.

Works on Edge and On-Prem Systems

Quantized models can run on:

- CPUs

- Laptops

- Mobile devices

- On-prem servers

This makes AI more accessible and scalable.

Trade-Offs of Quantization

Quantization is powerful, but it comes with compromises.

Accuracy Loss

Quantization can reduce model accuracy. The drop is usually small at 8-bit and becomes more noticeable at 4-bit and below. The impact depends on the task and the precision level used.
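
One rough way to get a feel for this is to quantize the same values at several bit widths and measure how far the restored values drift from the originals. The sketch below uses evenly spaced sample values and simple symmetric rounding. Real model accuracy depends on much more than this per-value error, so treat it only as an intuition aid.

```python
def roundtrip_error(values, bits):
    """Mean absolute error after quantizing and restoring the values."""
    qmax = 2 ** (bits - 1) - 1
    max_abs = max(abs(v) for v in values)
    scale = max_abs / qmax
    restored = [max(-qmax, min(qmax, round(v / scale))) * scale for v in values]
    return sum(abs(v - r) for v, r in zip(values, restored)) / len(values)


values = [i / 50 - 1 for i in range(101)]  # 101 evenly spaced values in [-1, 1]
errors = {bits: roundtrip_error(values, bits) for bits in (8, 4, 2)}

for bits, err in errors.items():
    print(f"{bits}-bit mean absolute error: {err:.4f}")
```

The error grows quickly as the bit width shrinks, because each step down halves the number of available levels several times over.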

Hardware Dependency

Not all hardware benefits equally from low-precision computation. Performance gains depend on whether the CPU or GPU has native support for low-precision operations.

Debugging Complexity

Lower numerical precision can introduce subtle issues that are harder to debug.

Not Ideal for Training

Quantization is mainly used for inference. Model training usually still requires higher precision, such as 32-bit or 16-bit floating point.

When Should You Use Quantization?

You should use quantization if:

- You are deploying AI models

- Latency matters

- Infrastructure cost matters

- You are running models on CPUs or edge devices

You may avoid quantization if:

- You are doing research experiments

- You need maximum numerical precision

- You are training large models from scratch

Final Thoughts

Quantization is a key reason why large AI models can run efficiently in real-world systems.

Done carefully, it does not make models noticeably less capable.

It makes them practical, scalable, and affordable.

If you are building production AI systems, quantization is no longer optional. It is essential.